I demoed some of my testing to Maaret Pyhäjärvi the other day. She asked a bunch of incisive questions, offered some helpful ideas on framing my results, and then suggested that I should consider speaking and writing about how I partition the testing space when I work.

> ... I learn so much hearing how [James] dissects the exact necessary information and combines it with an approach that provides better results than what is usual for testing.

Which, naturally, made me blush, but also reminded me that others have observed similar things. Back in 2016, during my presentation at MEWT #5, Iain McCowatt tweeted this:

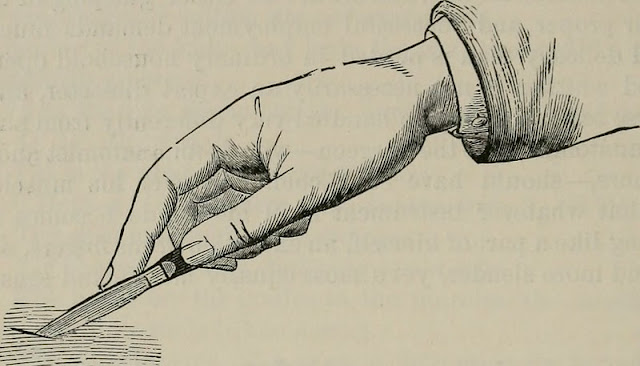

> [James] wields distinctions like a surgeon wields a scalpel.

So now I am wondering about how I can begin to externalise more of this aspect of my approach to testing.

I don't have the answer yet. Today's task is to collect some data on what I've said in the past. I'm listing it in the order I found it, with some commentary.

I have written and spoken a lot about how I test already, although I would guess it's largely in the context of specific projects. Off the top of my head, How To Test Anything is more generic than the others but, interestingly, it doesn't talk specifically about this topic.

However, a term that comes to mind immediately is factoring. I searched for a definition of it in software testing and came up with this post by Rikard Edgren, which also uses the term fractionation, after Edward de Bono in Lateral Thinking.

I have read Lateral Thinking and recall that I wrote some notes about it. Skimming them now, I see that I didn't pick fractionation out, but I did say this:

> Over the years I've come to value up-front exploration ... to build a model of the problem space before implementation. Frequently, even where the budget for a project is tiny, I'll try to isolate some time, however small, for it.

That post also mentions humour, which reminds me that I talked about factoring in my first talk, Your Testing is a Joke. This is from the paper that accompanied the presentation:

> ... I will often pick out ... entities and then look for concepts ... related to them ... and then see whether any of those concepts could link to one another or something related. I call this expansion and it feels like a close cousin of factoring. But where factoring is a process which seeks to list the intrinsic dimensions of a thing that may be relevant to the task in hand, expansion additionally looks for more general properties, instances, sub-parts, synonyms, antonyms and other related terms.

I had forgotten that I called this expansion at one point.

As I moved through this thread of thought, sensing that I might have something to say about it, The Dots popped into my head. It seems that, as so often, I have already said something about it.

Side note: one of the reasons that I find blogging valuable is that I can search and see what I think, or thought.

In this case, I still think idea strings are relevant to the topic at hand: an idea leads to an idea leads to an idea, or more than one idea. The challenge, as in testing, is to decide which thread to pull, and how far, and when.

This time, though, I didn't pursue that line further. Instead, I searched Hiccupps for other mentions of factoring and found a post I'd forgotten about, The Anatomy of a Definition of Testing. If you take a look at it, you can see that I've tried to group ideas together to understand the space I'm looking at and then chosen a piece of it to attack. That's an example of exactly the concept we're talking about.

Tracking back, that post comes from another, Testing All the Way Down, and Other Directions, where I said:

> I am testing the definition of testing in [Explore It!] using my testing skills to find and interpret evidence, skills such as: reading, re-reading, skim reading, searching, cataloguing, note-taking, cross-referencing, grouping related terms, looking for consistency and inconsistency within and outside the book, comparing to my own intuition, reflecting on the reaction of my colleagues ..., filtering, factoring, staying alert, experimenting, evaluating, scepticism, critical thinking, lateral thinking, ...

That may also be the first post where I talked about the non-linearity inherent in our work. We rarely discover everything we need to know at the moment we need it, or in the order that makes most sense, in a way that lets us walk straight to some mythical right answer.

I'll stop there for now. It's nearly tea time and I feel like this is a good start. I have identified some points in the space I'm considering and some material I can review in more depth if I want to. To be honest, I like to leave a task at this point and let my subconscious work on it for a while. What else do I already know here? How can I classify these things? How could I connect these ideas? How could I rearrange these things to make a contradiction?

I also like that I've captured something of the way I work here, so this post is itself an example of the thing I'm trying to think about. As I write that, a phrase arrives in my fingers: collect, arrange, slice. I don't know quite what I mean by it yet, but I'll keep it with the rest for now.

Image: https://flic.kr/p/owvFxg

Edit: I later wrote Collect, Arrange, and Slice.