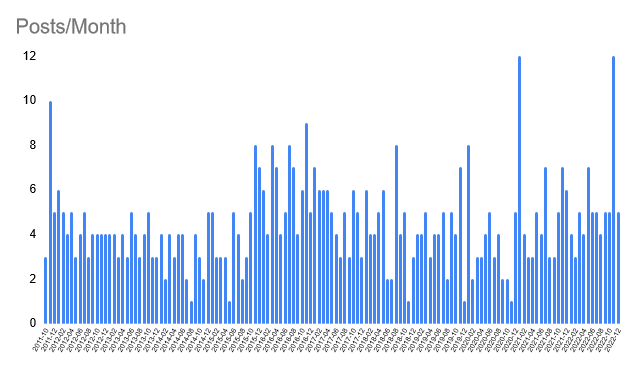

That's my 599 blog posts from October 2011 to December 2022.

Well, it's one view of them, a view that demonstrates that I show up and I ship. For me, these are useful, satisfying, and creative acts.

I like Seth Godin on creativity. In an interview with Thought Economics, he says:

What it means to be creative is pretty simple. It’s to do something human, something generous, something that might not work.

Tick, tick, tick ... I hope.

Godin is also interesting on achieving success. I don't know whether I'd say Hiccupps is successful in any objective sense. Godin's take is that you have to show up first:

Commitment to the process, the practice and the method comes before the success.

So, success? I wrote a music fanzine when I was younger. More than once it was described as the zine-writer's zine. Looking for a reference to that, I found this: "... zines that stood out, such as Robots and Electronic Brains [did so] because of the author’s deliberate use of the zine format [to insert] a personal aspect into their writing of the very subjective topic of musical taste."

I think perhaps I've done the same here, around testing. I'm not covering the same topics as everyone else, unless they happen to be topics that interest me right now. I strive to be myself in these posts because I learned the hard way that giving myself permission to be me unlocks more pleasure in the work and, I think, better outcomes (for me).

So, success? I get enjoyment from writing, writing helps me explore ideas, and I love exploring ideas. But why so many posts? This is what Linus Pauling said when asked how he had so many good ideas:

Have lots of ideas and throw away the bad ones.

Posting an idea as I currently have it helps me to challenge it, develop it, and connect it to other ideas.

I had a short excursion into ideas themselves a few years ago, and found that a helpful tool for creativity is to regard all ideas as bad. Why? Because then there is always space for improving on them.

This was inspired by Alan Dix's paper, Silly Ideas, where he said:

By playing with bad ideas you can get to understand a domain in a way that no good idea would let you.

Which, unexpectedly, prompts a question from me to me:

Is it still a good idea to have a blog?

In my 600th post, this is why I love blogging.