A month or two ago, after seeing how I was taking notes and sharing information, a colleague pointed me at Tiago Forte's blog on Building a Second Brain:

> [BASB is] a methodology for saving and systematically reminding us of the ideas, inspirations, insights, and connections we’ve gained through our experience. It expands our memory and our intellect...

That definitely sounded like my kind of thing, so I ordered the upcoming book, waited for it to arrive, and then read it in a couple of sittings. Very crudely, I'd summarise it something like this:

- notes are atomic items, each one a single idea, and are not just textual

- notes should capture what your gut tells you could be valuable

- notes should capture what you think you need right now

- notes should preserve important context for restarting work

- notes on a topic are bundled in a folder for a Project, Area, or Resource and moved into Archive when they're done (PARA)

- activity around notes has four stages: Collect, Organise, Distill, Express (CODE)

- notes, and topics, will need to be curated over time

- notes effectively sub-contract memory to a third party

- notes are building blocks for your work

- notes make space for synchronicity and connections to occur as you organise them

- there is overhead in managing notes

- ... but it pays for itself by enabling creativity

You might wonder how to implement this kind of system. Forte favours something electronic that can run on multiple platforms and share data in the cloud: a system that is always on, searchable, and linkable. But he's careful to say that (Kindle reference 2962-2963):

> ... over the years I’ve noticed that it is never a person’s toolset that constrains their potential, it’s their mindset.

and (3147-3147):

> There is no single right way to build a Second Brain.

And that's good because, while there's plenty here that's familiar, there's also much that's formalised in ways that feel unnatural to me.

Thinking only about my testing interests, I store information in many places. Right now this includes:

- how-to pages in Confluence

- test notes in Confluence

- ... with unpublished data from test notes on my laptop

- a file on my laptop with miscellaneous notes from meetings I've attended

- project-specific folders on my laptop with notes, data, and so on

- reflections in this blog

- ... and ideas for future posts on my laptop

- schematics, mind maps, architecture diagrams, etc. in Miro

- numeric and tabular data in Google Sheets

- helper scripts and other tools in GitHub

- browser bookmarks for stuff I want to reference regularly

- links to videos and other materials for work presentations on my page in Confluence

- links to videos, slides, and articles for testing material outside this blog

How I think the information will be used, and who will use it, influences which "brain" I store it in and how much I will curate it.

For example, how-tos that I hope will be valuable to me and my colleagues are written straight into Confluence and labelled so that they group with other related notes. They can be as skeletal as a couple of command line calls for some occasional activity, or as rich as a sequence of steps with screenshots for some complex interaction with Jira.

We have a good culture in the team of trying to keep this material in step with our practices and of maintaining a single source of truth. This is a collaborative brain.

Test notes are written to a text file and regularly pushed to Confluence. They are "live" in the sense that I write them as I test, noting down observations, ideas, evidence, and so on. They preserve context for me to return to when I pause testing for some reason. When I've finished a particular mission, they're published and cross-referenced in Jira tickets, regulatory documents, and so on.

Ideas for new projects and blogs are dropped into a text file per topic and are probably close to the concept of notes in Building a Second Brain. In the past I've called them fieldstones, following Jerry Weinberg: I collect them as I go, and when I come to write a post I often have material in the area that can be shuffled to kick things off.

For this post, the starting content came from Kindle's highlighting and notes feature and from several lists that I dumped into the text file: places I keep notes, the kinds of notes I keep, the uses I put notes to, and other things that I could plausibly class as second-brain activities.

Some material more naturally belongs in other formats. I started tracking my team's release lead time, from triggering request to first deployment. Each observation is an atomic event and could be recorded as a textual note.

But I anticipated being able to do numerical analysis on the data once I had enough of it. So I structured it in a spreadsheet and, as I learned more about what kind of data might be available and valuable, I restructured it iteratively. Like the how-tos, this is public and others now assist with its maintenance.
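If the spreadsheet were exported as CSV, the numerical analysis could start as small as the sketch below. It rests on assumptions. The column names `requested_at` and `deployed_at` are hypothetical, the timestamps are assumed to be ISO 8601, and the median and maximum are only examples of summaries you might want.

```python
import csv
from datetime import datetime
from statistics import median


def rows_from_csv(lines):
    """Read (requested_at, deployed_at) pairs from CSV lines, e.g. an open file.

    The column names are hypothetical; rename them to match the real sheet.
    """
    return [(row["requested_at"], row["deployed_at"]) for row in csv.DictReader(lines)]


def lead_time_hours(requested_at: str, deployed_at: str) -> float:
    """Hours from the triggering request to the first deployment (ISO 8601 timestamps)."""
    delta = datetime.fromisoformat(deployed_at) - datetime.fromisoformat(requested_at)
    return delta.total_seconds() / 3600


def summarise(rows):
    """Count, median, and maximum lead time in hours for a list of observations."""
    hours = [lead_time_hours(requested, deployed) for requested, deployed in rows]
    return {"count": len(hours), "median_h": median(hours), "max_h": max(hours)}
```

You would call it as `summarise(rows_from_csv(f))`, where `f` is the exported file opened with `newline=""`. The median is a deliberate choice over the mean because a single unusually slow release moves it much less.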

Some of my notes are executable. I keep a GitHub repo of small utility scripts for things that I do more than occasionally. These are always in flux. As I encounter some new use case, or get fed up with some friction, I'll extend or split one, or write a new one. Again, they are public inside the company and I share them eagerly with others. Sometimes material migrates out of a how-to, from a set of steps into a script: from one external brain to another.

A significant difference between these examples and those in Building a Second Brain is that, for the most part, the material is working for me and others in something other than note form, yet is still a searchable, malleable, updateable resource.

It's true that it's not searchable in a single shot. I can't search Confluence and Google Drive at the same time, say. But that has not proved to be a major problem. I find that I use the address bar in my browser as a default starting position. Naming the material I create in consistent ways can make it discoverable by its name, and this works across those repositories that are accessed by URL.

But I don't just outsource knowledge management to another brain. I use outside brains to help organise and manage my work. I'd be surprised if you don't do that too, with at least some kind of calendar.

I try to make my calendar usage rich. If I'm booking a meeting with someone, I will dump a note on the purpose of the meeting, the points I'd like to cover, and links to any contextual material into the description when I make the booking. This is a courtesy to them, but also to my future self.

I have reminders in the calendar too, for stuff that I don't want to forget, such as putting my mouse and keyboard on to charge at the end of the week. I use Slack notifications, both personal and team-wide, to remind us of regular shared tasks. And I use cron to run regular tasks that can happen unattended.

Since I started working remotely, I have begun to use a personal kanban board rather than a to-do list in a paper notebook. This is largely because I can. The kanban board is easier to maintain and visualise because I am always in the same place. When I was in the office, going from meeting to meeting, I needed something I could carry with me.

I make templates for common activities in the place they are required, e.g. in GitHub PRs or Jira tickets. I find that it is worth crafting these carefully, or they are ignored. They need to be complete enough to contain all of the required information, but concise and easy enough to navigate that they don't add inertia of their own. This is a tricky compromise to get right.

Friction is an interesting driver for creating tools that take over some of your brainwork. Just two weeks ago I realised that navigating between test notes directories was getting tiresome because the directory structure is reasonably deep (`/Users/james/sessions/<YEAR>/<MONTH>/<DATE>_<TITLE>`). So I wrote a couple of little utilities to give me better access: one that lists the directories I've created today, and one that lists those I've created this month.

As these are the most likely places for me to be looking, I can simply type `day` or `month` and get a list that I can copy-paste from. This is good enough for now. Some friction was removed and I already see benefits.
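A minimal Python sketch of utilities like `day` and `month` might look like this. It is not the author's implementation and it rests on assumptions. `<MONTH>` is taken to be a two-digit number and `<DATE>` an ISO date such as `2022-06-15`. `SESSIONS_ROOT` and `session_dirs` are hypothetical names. The sketch reads each session's date from its directory name rather than from filesystem creation times, because those are not reliably available on every platform.

```python
import sys
from datetime import date
from pathlib import Path
from typing import Optional

# Hypothetical layout: <root>/<YYYY>/<MM>/<YYYY-MM-DD>_<title>
SESSIONS_ROOT = Path.home() / "sessions"


def session_dirs(root: Path):
    """Yield (session_date, path) for each session directory under root."""
    for path in sorted(root.glob("*/*/*_*")):
        if not path.is_dir():
            continue
        try:
            session_date = date.fromisoformat(path.name.split("_", 1)[0])
        except ValueError:
            continue  # skip names that don't start with an ISO date
        yield session_date, path


def day(root: Path = SESSIONS_ROOT, today: Optional[date] = None):
    """Session directories dated today."""
    today = today or date.today()
    return [p for d, p in session_dirs(root) if d == today]


def month(root: Path = SESSIONS_ROOT, today: Optional[date] = None):
    """Session directories dated in the current calendar month."""
    today = today or date.today()
    return [p for d, p in session_dirs(root) if (d.year, d.month) == (today.year, today.month)]


COMMANDS = {"day": day, "month": month}

if __name__ == "__main__" and len(sys.argv) == 2 and sys.argv[1] in COMMANDS:
    for path in COMMANDS[sys.argv[1]]():
        print(path)  # one path per line, ready to copy-paste
```

Run as `python sessions.py day` (the filename is hypothetical), or behind a shell alias, it prints matching directories one per line. Passing `today` explicitly makes the filters easy to check against a throwaway directory tree.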

I also see that it's not perfect. The naive implementation I used works by calendar month rather than over the last 30 days. This means that at month boundaries I lose some of the utility of the tool. Time will tell whether I find there's enough friction here that I need to iterate or completely revise the approach.
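The month-boundary problem is easy to see in isolation. This sketch compares a calendar-month rule with a rolling window over the last 30 days. The function names are hypothetical, and the 30-day default just mirrors the alternative mentioned above.

```python
from datetime import date, timedelta


def in_calendar_month(session_date: date, today: date) -> bool:
    """The naive rule: same year and month as today."""
    return (session_date.year, session_date.month) == (today.year, today.month)


def in_last_n_days(session_date: date, today: date, n: int = 30) -> bool:
    """A rolling window: the n days ending with today, inclusive."""
    return today - timedelta(days=n - 1) <= session_date <= today


# On the first of a month, yesterday's session drops out of the
# calendar-month view but stays inside the rolling window.
today = date(2022, 7, 1)
yesterday = today - timedelta(days=1)
print(in_calendar_month(yesterday, today), in_last_n_days(yesterday, today))  # prints: False True
```

The rolling rule gains continuity across month boundaries. In exchange, it loses the simple mental model of "everything from this month".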

Forte notes, quite early on, that (764-765):

> Creativity depends on a creative process.

and later (1963-1965):

> The challenge we face in building a Second Brain is how to establish a system for personal knowledge that frees up attention, instead of taking more of it.

I like to think of myself as reasonably creative, and I definitely think that the way I organise my work, my external brains, assists in that. This week I gave a short presentation at work called Opening Paint Tins With Screwdrivers. In it I showed how I'd made an ad-hoc profiling tool by bolting together existing scripts, a little manual orchestration, and some data manipulation in Google Drive.

Some of those pieces do not come from a second brain. Knowing how to do pivot charts for data manipulation is something that I keep in my first brain. Remembering the steps to connect to a local Kubernetes cluster is something that I outsource to a script. When I need to do something other than the very standard steps, I can use the script as the basis for the variant.

I find it productive to ask this: what shape is the problem, and which of the material in my first or second brains could fit that shape, in what combinations, or could be used to fabricate something that fits?

Elephants never forget, they say, and the elephant in this room is Google. It's everyone's second brain, and it's harvesting new information all the time without any effort on our part. I exploit Google too, by trying not to archive material that I think I can easily find elsewhere and will be able to keep referencing in future.

I find this kind of meta-work fascinating and I'm particularly interested to know why I don't ever seem to have suffered from the same kind of serial adoption and rejection of productivity tooling that I've seen from others.

Reflecting on that, I wonder if it's because I've generally tried to use tools that are already in the context and iterate them into something useful for me, rather than impose a single approach top-down.

Forte has found great value in a specific top-down system, but it's worth noting that he also tried many things on his way to it and exercises judgement about what is admitted (913-914):

> The best curators are picky about what they allow into their collections, and you should be too.

So if there's a lesson here at all, I guess it's that to amplify your capabilities in a sustainable, long-term way, you need to invest in observation, effort, and judgement. Ironically, perhaps, these are things that will only come from engaging your first brain.

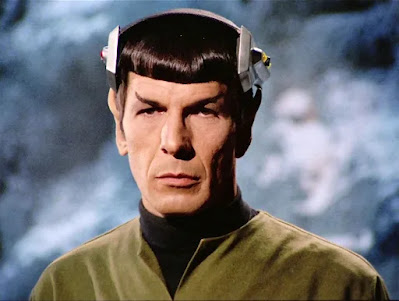

Image: Memory Alpha