For over a year the roadworks near our house have been a riot of signage, inspiring me and my daughter to make silly songs using their words for lyrics as we walked to school.

Then she got the idea that we should make a "proper" song. So I downloaded n-Track and we hacked together a techno instrumental over a few evenings. Unfortunately, recording decent-quality vocals at home without much equipment is non-trivial, and then real life intervened anyway, so the project stopped.

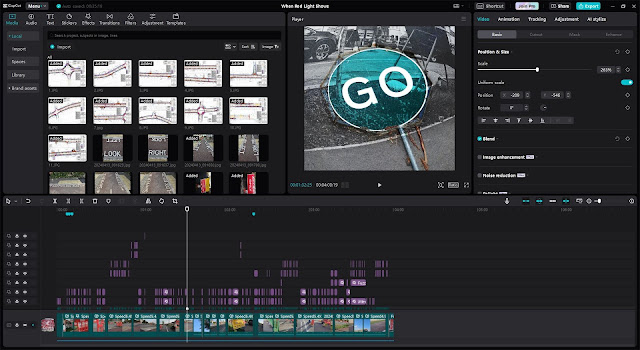

A few months later I came across Suno, an AI song generator, and had a lightbulb moment. Suno exposes a prompt for musical style and a prompt for lyrics, so we had it make a song, When Red Light Shows, based on the signs. I think it was my daughter who suggested we should make a video too, so we did, with a non-AI tool, CapCut.

The lyrics came from signs, the music came from AI, and we made the images yet I feel that we were creative across all of those areas. Reflecting on our experience, the interesting aspects for me are the capabilities that the different kinds of tools afforded us and the roles we took relative to them. This blog post gets some thoughts down about that.

But first here's the song and video:

The initial act of creativity was noticing the opportunity to be creative and finding a way to take it. This was all on us. Go humans!

Choosing to make the lyrics from the road signs could be seen as an abdication of creativity. I don't think of it that way; there are traditions in art that recycle and reinvent for commentary, or to recontextualise, or simply to provoke unexpected and novel perspectives, as famously used by David Bowie. I don't want to intellectualise our efforts so I'd say that we were building from the material around us, for our own amusement. That's what we humans do.

But on a more practical note, simply having a collection of photos of signs does not make a song. Effort and judgement are required to take the raw words and organise them into something that sounds compelling.

Of course, given that Suno "wrote" and "performed" the music, there's clearly no creativity in it, right? Well, in some sense that's correct: we didn't compose or play a note. But we had the vision and we drove the tooling. In particular, prompt engineering and review of the outcomes was a significant part of the process.

This is the style prompt we ended up using:

80s minimal hip hop with primitive scratching, fast 808 beats, dirty bass, male and female rap vocal

and these are some of the variations we tried along the way:

80s minimal hip hop no instruments just beats and bass, primitive scratching, 808s beats fast tempo new york

80s minimal hip hop, primitive scratching, raw beats, fast tempo, roadworks sound effects, two rappers trading lines

80s minimal hip hop primitive scratching, 808s beats fast tempo new york swagger

80s minimal hip hop with primitive scratching, heavy bass, 808s beats and new york rapper vocal

80s minimal hip hop with primitive scratching, fast 808 beats, dirty bass, rap vocal

80s minimal hip hop with primitive scratching, fast 808 beats, dirty bass, twin rap vocals

80s minimal hip hop with primitive scratching, fast 808 beats, massive dirty jungle bass drops, male and female rap

In all honesty I don't know whether I'd say that the sound of our song is what I'd imagine given the prompt but it is a sound that we liked a lot.

While iterating the prompt, we were also tweaking the lyrics to encourage Suno to render them in a way that appealed to us. For example, we found that metatags helped some but were not very reliable, strings of digits such as phone numbers were handled badly, and longer lines tended to get garbled.

This is the start of the final lyrics we used and even here the initial chorus was completely ignored and part of the verse was repeated, but again we liked the rest of the track so we went with it:

[Chorus]

When red light shows wait here

When red light shows wait here

When red light shows wait here

Wait here, when red light shows

[Verse]

Bus Stop Is Now Open

Cycle Lane closed

Cyclists dismount here

To Be Marshalled

Through Works

Cyclists / Pedestrians

Please Wait Here

Temp Bus Stop

Beware of increased traffic levels

Access to shops

Crossing not in use

Pedestrians

For each prompt we'd request four or six tracks (they come in pairs), listen to them, see whether they fitted the vibe that we were looking for and then either generate more variants or tweak the prompt or lyrics, or both, and go again.
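For the programmers reading, the loop we were running by hand looks roughly like the sketch below. To be clear, this is an illustration and not how we actually drove Suno: it makes no Suno calls, `fake_generate` and `we_like_it` are hypothetical stand-ins for "ask for a pair of tracks" and "listen and decide", and the track labels are made up.

```python
from typing import Callable, List, Optional


def generate_and_test(
    generate: Callable[[str, str], List[str]],
    accept: Callable[[str], bool],
    style_prompts: List[str],
    lyrics: str,
    batches_per_prompt: int = 2,
) -> Optional[str]:
    """Try each style prompt in turn and return the first track we like."""
    for style in style_prompts:
        # Two or three batches per prompt gives the four or six tracks we'd request.
        for _ in range(batches_per_prompt):
            for track in generate(style, lyrics):
                if accept(track):
                    return track
    # Nothing fitted the vibe: time to tweak the prompt or lyrics and go again.
    return None


def fake_generate(style: str, lyrics: str) -> List[str]:
    """Hypothetical stand-in for the generator: tracks come in pairs."""
    return [f"{style} | take A", f"{style} | take B"]


def we_like_it(track: str) -> bool:
    """Hypothetical stand-in for two humans listening and deciding."""
    return "dirty bass" in track and track.endswith("take B")


STYLE_PROMPTS = [
    "80s minimal hip hop primitive scratching, 808s beats fast tempo new york swagger",
    "80s minimal hip hop with primitive scratching, fast 808 beats, dirty bass, male and female rap vocal",
]

chosen = generate_and_test(
    fake_generate, we_like_it, STYLE_PROMPTS, "When red light shows wait here"
)
print(chosen)
```

The interesting part is `we_like_it`: in real life that was the two of us listening to each track, and no amount of code replaces it.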

Intuitively, we wanted to be having a ChatGPT-like conversation about the music with Suno through the prompt, but requests like "add funky melody and make the vocals clearer" were not successful. Whatever context there is does not appear to carry across generation attempts.

Once we found a track that we liked, we could choose to "extend" it, which creates another track, musically closely related to the first, that could later be glued on. For each extension we repeated the generate-and-test process, keeping the prompt static but adding more lyrics. Our final song is several tracks stitched together.

Each iteration took around a minute to render so the feedback cycle is not fast and I guess we generated over 100 tracks in total. We didn't like the outro that Suno generated so a final step after downloading the MP3 was to use Audacity to crop it at four minutes.

If you know what you are doing, CapCut is probably quite straightforward and, to be honest, even as a newbie I found its interface pretty friendly and intuitive. Having said that, it's a whole lot more complex than typing a request into a box and waiting a minute for completed work to pop out.

Not knowing what many of the features did meant that we experimented quite a lot to look for effects that we liked. While we might have had the strategic vision, we didn't have a strong idea of the tactics we'd use to realise it.

In that respect, there was a very similar loop to our use of Suno. We'd edit a piece, play it back, change some factor like the visual effects we'd used, or the timing of the effects, or the zoom on the sign, and play again. Generate and test, generate and test, generate, test, generate, test, generate ...

At times, as in any creative process, there was synchronicity that we could take advantage of. For example, there's a beat at 2:20 that fit almost perfectly with a short static image of a traffic light in the video, so we synched them.

This wasn't intended, and if we'd taken a different path it might never have even appeared to us as an option. Should we credit the humans here, or the tool, or luck, or something else?

Comparing our activities with the different materials and applications has been interesting. At a high level I don't see much difference between using CapCut and using Suno: they are both tools that take our input and produce output that we can use in ways that suit us. At lower levels, though, they have strengths and weaknesses along various dimensions such as:

Generativity: Suno can create something from literally no input. Even two empty prompts will create a song that you can iterate on. CapCut is nothing without source material and some direction from the user.

Precision and control: Suno gives no precise control, no guarantee of repeatability, no chance to edit some small aspect of a track. CapCut gives all the control, with numerous parameters for behaviour at all sorts of granularities and it's straightforward to copy a pattern and reapply it in other places.

Startup costs: Suno is trivial to use immediately. CapCut takes effort and time to get into.

Hidden costs: Although there are tricks that can be learned to try to direct Suno more in the direction you want, I'd guess you can't become expert in Suno in the sense that, given a brief, you can know exactly how to realise it. You will likely always pay the "I didn't want that" cost of generate-and-test and you might need to, as we did, post-process the output. I wouldn't expect an investment in learning CapCut to have this problem.

Synchronicity: Both tools offer the chance of happy accidents. The user needs to take more control of the experiments in CapCut but there is enough variability that unexpected outcomes are not hard to come by.

I'm very aware that, in return for the advantages of Suno, we risk falling into Harry Collins's RAT trap where we assign the software unwarranted capability, accept compromises easily, and overlook shortcomings in its functionality and usability. Also, the stakes are extremely low here: no-one dies if Suno messes up the lyrics to our song.

In this scenario particularly, but also to some extent in an analogous project at work where we're building a testing tool that exploits the relative unpredictability of an LLM to help us explore, it is clear to me that the trade-offs are not just technical but also egotistical and social: to what extent is a creation "mine" when I've used tools with a high generativity on it? What do I think about that? What credit do others give me for that?

I think we were pragmatic in our choice of tools. Where our vision required control (video) we used a tool that gave us that control. Where we were happy to cede some control in exchange for output at a quality we could not produce ourselves (vocals) we used a different tool.

I didn't set out to do some kind of AI experiment. Instead I set out to have some fun with my daughter and found tools that enabled us to produce something, some art, that we're proud of and I'm happy is our work. For sure, we were sometimes more creators and sometimes more curators, but at no point were we ever mere spectators.